- Why small language models are becoming the AI industry standard in 2026

- How SLMs match large models in accuracy while cutting costs dramatically

- The connection between SLMs and the edge computing revolution

- Real-world business applications showing SLMs’ advantages for specific tasks

- AT&T and major enterprises are adopting SLMs over traditional large models

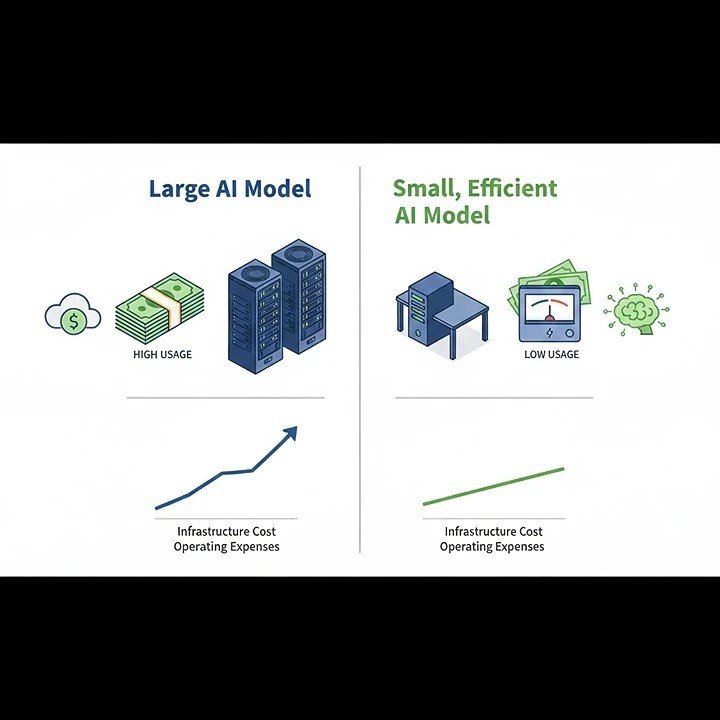

- Cost comparison: SLMs versus expensive large language model infrastructure

- Implementation strategies for businesses transitioning to small models

Small Language Models (SLMs) represent 2026’s biggest AI shift. These compact, specialized models can match large models in accuracy on specific tasks while costing significantly less and running faster. Major enterprises like AT&T are adopting SLMs because fine-tuned versions outperform generalized large models on those tasks. SLMs also enable edge computing deployment, which makes AI practical for businesses without massive budgets.

The AI Industry’s Surprising Turn

Something unexpected is happening in artificial intelligence. The race for bigger models is slowing down, and companies are choosing smaller, smarter alternatives.

This shift challenges conventional wisdom. Previously, bigger was supposed to be better, and more parameters meant superior performance. That narrative is changing fast.

Small Language Models are proving that size doesn’t guarantee success. For well-defined tasks, focused capability beats general knowledge and efficiency trumps raw computing power. This realization is transforming enterprise AI strategies.

What Are Small Language Models?

Small Language Models contain far fewer parameters than the largest models and are trained for particular tasks. This specialization creates surprising advantages.

Think of SLMs as expert specialists. Large models are generalists that know a little about everything, while SLMs know specific domains deeply. This focus delivers better results where it matters.

The technical difference is significant. Large models can have tens or hundreds of billions of parameters, while SLMs operate with millions or low billions. On the tasks they are tuned for, their performance often matches or exceeds that of larger counterparts.
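To make the size gap concrete, the sketch below estimates how much memory a model's weights alone occupy at a given numeric precision. The parameter counts (3 billion and 70 billion) and the function name `weight_memory_gb` are illustrative assumptions, not figures for any specific model, and the estimate ignores activations and runtime overhead.

```python
def weight_memory_gb(num_params: float, bits_per_param: int) -> float:
    """Approximate memory for model weights only, in gigabytes (1 GB = 1e9 bytes)."""
    bytes_total = num_params * bits_per_param / 8
    return bytes_total / 1e9


# Hypothetical sizes chosen for illustration only.
small = weight_memory_gb(3e9, 16)    # a 3-billion-parameter model at 16-bit precision
large = weight_memory_gb(70e9, 16)   # a 70-billion-parameter model at 16-bit precision

print(f"small model weights: {small:.1f} GB")
print(f"large model weights: {large:.1f} GB")
```

Under these assumptions the smaller model needs about 6 GB for its weights and the larger one about 140 GB, which is the kind of gap that decides whether a model fits on a single local machine.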

Why AT&T Chose Small Over Large

AT&T is among the major enterprises adopting SLMs over traditional large models. The company describes SLMs as “superb in cost and speed,” and fine-tuned versions outperform generalized large models on the specific tasks they are trained for.

The Cost Revolution

Financial considerations drive much of SLM adoption. Large language models require expensive infrastructure, their training costs reach millions of dollars, and operating expenses keep climbing.

SLMs change this equation. Training costs drop by orders of magnitude, infrastructure requirements shrink, and energy consumption decreases.

As a result, smaller companies can now afford AI. Previously, only tech giants had access to cutting-edge capabilities. SLMs broaden that access and level the competitive playing field.

The savings extend beyond the initial investment. Ongoing operational expenses remain low, and companies that deploy locally avoid perpetual cloud computing charges.
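One way to check whether local deployment is financially viable is a simple break-even calculation. The sketch below is a minimal illustration: every dollar amount is a made-up placeholder, and `breakeven_months` is a hypothetical helper, so substitute real quotes before drawing conclusions.

```python
import math


def breakeven_months(upfront_local: float, monthly_local: float, monthly_cloud: float) -> float:
    """Months until the upfront local investment is repaid by lower monthly costs.

    Returns math.inf if local operation is not cheaper per month than cloud.
    """
    monthly_saving = monthly_cloud - monthly_local
    if monthly_saving <= 0:
        return math.inf
    return upfront_local / monthly_saving


# Placeholder figures for illustration only (not from any vendor or case study).
months = breakeven_months(upfront_local=24_000, monthly_local=1_000, monthly_cloud=5_000)
print(f"break-even after {months:.1f} months")
```

With these placeholder numbers the hardware pays for itself after six months; if the monthly cloud bill were lower than the local running cost, the function would report that break-even never arrives.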

Performance That Surprises Skeptics

Early skeptics wondered how smaller models could compete. The answer lies in specialization.

French AI startup Mistral demonstrated this convincingly: its small models outperform larger ones on specific benchmarks after fine-tuning. For these tasks, focused expertise matters more than general knowledge.

Jon Knisley from ABBYY explained the principle: efficiency, cost-effectiveness, and adaptability define SLMs, and these qualities make them ideal for precision applications. Tailored solutions beat one-size-fits-all approaches.

Real-world results support these claims. Businesses report high accuracy, faster response times, and improved user satisfaction.

The Edge Computing Connection

Edge computing and SLMs are natural partners. Both emphasize local processing over cloud dependence.

Edge devices have limited computational resources, so large models simply won’t run there. SLMs fit within these constraints, which enables AI where it wasn’t possible before.

Matt White from the PyTorch Foundation predicted this trend: domain-optimized smaller models are becoming central, helped by advances in distillation and quantization, while memory-efficient runtimes push inference to edge clusters.
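Quantization is one of the techniques that shrinks a model enough to fit on an edge device. The sketch below shows the basic idea with symmetric 8-bit quantization of a small list of weights in plain Python. It is a teaching illustration under simplified assumptions (one scale for the whole list, no calibration), not how any particular runtime implements it, and the function names are hypothetical.

```python
def quantize_int8(weights):
    """Symmetric quantization: map floats to integers in [-127, 127] with one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float values from the stored integers."""
    return [q * scale for q in quantized]


# Illustrative weights; real models have millions or billions of them.
weights = [0.5, -1.27, 0.0, 1.27]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)

# Each weight now needs 8 bits instead of 32, at the cost of a small rounding error.
max_error = max(abs(w - r) for w, r in zip(weights, restored))
print(q, f"scale={scale:.4f}", f"max_error={max_error:.6f}")
```

The trade-off is visible in `max_error`: storage drops to a quarter of 32-bit floats, and the rounding error per weight stays within half of one quantization step.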

The benefits are substantial. Latency drops because requests no longer make a round trip to the cloud. Privacy improves through local processing. Data sovereignty concerns decrease because data stays on site.

Real-World Business Applications

Several industries are already embracing SLMs, and their use cases demonstrate practical value. Let’s examine several examples.

Healthcare Applications

Medical diagnosis requires specialized knowledge that general AI models often lack. Fine-tuned SLMs can excel at specific conditions.

They analyze patient data locally, which makes privacy regulations easier to satisfy. On narrow, well-defined conditions, diagnostic accuracy can approach that of specialist doctors, and response times are fast enough to support real-time clinical decisions.

Financial Services

Banks need precise fraud detection. False positives cost money, and false negatives let fraud through. SLMs trained on transaction patterns can reduce both.

They also process data on local servers, which simplifies regulatory compliance. Detection rates improve, and so does the customer experience.
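The trade-off between false positives and false negatives can be measured directly. The sketch below counts both on a tiny hand-made set of labels and derives precision and recall. The labels are invented for illustration and `fraud_metrics` is a hypothetical helper, not part of any fraud-detection product.

```python
def fraud_metrics(y_true, y_pred):
    """Count errors and compute precision/recall, where 1 = fraud and 0 = legitimate."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)   # fraud correctly flagged
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)   # legitimate transaction blocked
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)   # fraud missed
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    return {"false_positives": fp, "false_negatives": fn,
            "precision": precision, "recall": recall}


# Invented labels for illustration only.
actual    = [1, 0, 1, 1, 0, 0]
predicted = [1, 1, 0, 1, 0, 0]
print(fraud_metrics(actual, predicted))
```

Tracking these numbers before and after fine-tuning gives a concrete way to check whether a specialized model really reduces both kinds of error on a bank's own transactions.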

Manufacturing Quality Control

Production lines generate massive data streams, and analyzing everything centrally creates delays. Edge-deployed SLMs solve this problem.

They identify defects on the line as they occur, so production continues without interruption. Quality improves while costs decrease, and less waste saves significant money.

Retail Personalization

Stores want tailored customer experiences, but sending data to the cloud raises privacy concerns. Local SLMs provide personalization without that exposure.

They analyze shopping patterns locally, so recommendations happen in real time. Customer trust increases, and sales conversion rates improve.

The Fine-Tuning Advantage

Base SLMs perform adequately out of the box. Fine-tuned SLMs perform far better on their target tasks, and that difference matters.

Fine-tuning adapts a model to specific needs. First, companies provide relevant training data. Then, the model learns the patterns in that data. Finally, performance improves for the target application.

Enterprises therefore invest in fine-tuning expertise. Data scientists become crucial assets, and domain knowledge guides the training process. The results generally justify the investment.

ABBYY emphasizes this point: precision is paramount in enterprise applications, and fine-tuned SLMs deliver the required accuracy. Generic large models often cannot match this specificity.

Comparing SLMs to Large Models

Direct comparisons reveal interesting patterns. Neither approach wins universally. Context determines the better choice.

When SLMs Excel

Specific, well-defined tasks favor SLMs. Limited computational resources require them. Privacy-sensitive applications benefit greatly. Cost-conscious projects prefer them strongly.

Edge deployment absolutely demands SLMs. Real-time response needs encourage them. Domain-specific expertise requirements suit them. Regulatory compliance often necessitates them.

When Large Models Win

Broad general knowledge tasks need large models. Creative content generation often requires them. Multi-domain reasoning favors their breadth. Research applications benefit from wide knowledge.

However, even these advantages are shrinking. SLMs are improving rapidly, and hybrid approaches that combine small and large models are emerging to offer the benefits of both.
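One common shape for a hybrid setup is a router that tries the small model first and falls back to a large model only when the small one is unsure. The sketch below assumes the small model returns a confidence score alongside its answer. Both model functions are hypothetical stand-ins written for this example, and the 0.8 threshold is an arbitrary placeholder.

```python
from typing import Callable, Tuple


def route(prompt: str,
          small_model: Callable[[str], Tuple[str, float]],
          large_model: Callable[[str], str],
          threshold: float = 0.8) -> Tuple[str, str]:
    """Return (answer, which_model). Use the small model unless its confidence is low."""
    answer, confidence = small_model(prompt)
    if confidence >= threshold:
        return answer, "small"
    return large_model(prompt), "large"


def demo_small_model(prompt: str) -> Tuple[str, float]:
    # Hypothetical stand-in for a fine-tuned SLM that classifies billing questions.
    if "invoice" in prompt.lower():
        return "billing", 0.95
    return "unknown", 0.30


def demo_large_model(prompt: str) -> str:
    # Hypothetical stand-in for a call to a general-purpose large model.
    return "general-answer"


print(route("Where is my invoice?", demo_small_model, demo_large_model))
print(route("Write a poem about autumn", demo_small_model, demo_large_model))
```

The design choice here is that the expensive model is only paid for on the minority of requests the specialist cannot handle, which keeps average cost and latency close to the small model's.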

Implementation Strategy for Businesses

Organizations considering SLMs should follow structured approaches. Random experimentation wastes resources. Strategic planning maximizes returns.

Step One: Identify Use Cases

Determine specific problems needing solutions. Evaluate current AI applications critically. Identify where specialization beats generalization.

Look for repetitive, well-defined tasks. Consider privacy-sensitive operations carefully. Examine edge computing opportunities thoroughly.

Step Two: Assess Infrastructure

Evaluate existing computational resources honestly. Determine deployment location requirements. Consider data storage and access needs.

Edge deployment requires different planning. Local servers need adequate specifications. Network connectivity impacts some applications.

Step Three: Data Preparation

Gather relevant training data systematically. Check that it is high quality and representative of real usage. Clean and label it thoroughly.

Fine-tuning requires good data. Quality matters more than quantity. Domain expertise guides data selection.
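The cleaning step can start very simply. The sketch below normalizes whitespace, drops empty or unlabeled rows, removes duplicates, and holds out a validation split. The example rows, labels, and the `prepare_examples` helper are all invented for illustration, and real pipelines usually need domain-specific checks on top.

```python
import random


def prepare_examples(rows, val_fraction=0.2, seed=0):
    """Clean (text, label) pairs and split them into train and validation sets."""
    seen = set()
    cleaned = []
    for text, label in rows:
        text = " ".join(text.split())      # collapse stray whitespace
        if not text or label is None:      # drop empty or unlabeled rows
            continue
        key = text.lower()
        if key in seen:                    # drop case-insensitive duplicates
            continue
        seen.add(key)
        cleaned.append((text, label))

    rng = random.Random(seed)              # fixed seed keeps the split reproducible
    rng.shuffle(cleaned)
    n_val = int(len(cleaned) * val_fraction)
    if len(cleaned) > 1:
        n_val = max(1, n_val)              # keep at least one validation example
    return cleaned[n_val:], cleaned[:n_val]


# Invented support-ticket rows for illustration only.
rows = [
    ("Reset  my router", "network"),
    ("reset my router", "network"),        # duplicate after normalization
    ("", "billing"),                       # empty text
    ("Refund request", None),              # missing label
    ("Cancel my plan", "billing"),
    ("Upgrade my plan", "billing"),
    ("No signal at home", "network"),
    ("Bill is wrong", "billing"),
]
train, val = prepare_examples(rows)
print(len(train), "training examples,", len(val), "validation examples")
```

Keeping a validation set aside from the start matters because it is the only honest way to tell later whether fine-tuning actually improved the model.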

Step Four: Model Selection

Choose an appropriate base SLM. Weigh parameter count against your hardware limits, and evaluate licensing terms before committing.

Open-source options provide flexibility. Proprietary models offer support guarantees. Match selection to organizational needs.

Step Five: Fine-Tuning Process

Work with experienced data scientists. Define clear performance metrics up front, and iterate based on results.

Test in a controlled environment first. Deploy to production only after successful validation, and keep monitoring afterward.
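The "validate before deploying" rule can be expressed as an explicit gate. The sketch below compares model predictions against held-out reference labels and only passes if accuracy meets a target. The 0.9 default, the sample labels, and the `passes_validation` name are assumptions made for this example; the right metric and threshold depend on the application.

```python
def passes_validation(predictions, references, min_accuracy=0.9):
    """Return (passed, accuracy) for predictions measured against held-out labels."""
    if not references or len(predictions) != len(references):
        raise ValueError("predictions and references must be non-empty and equal in length")
    correct = sum(1 for p, r in zip(predictions, references) if p == r)
    accuracy = correct / len(references)
    return accuracy >= min_accuracy, accuracy


# Invented labels for illustration only.
references  = ["billing", "network", "billing", "network"]
predictions = ["billing", "network", "billing", "billing"]

passed, accuracy = passes_validation(predictions, references, min_accuracy=0.9)
print(f"accuracy={accuracy:.2f}, deploy={'yes' if passed else 'no'}")
```

The same check can run on fresh samples after launch, which turns ongoing monitoring into a repeat of the validation step rather than a separate process.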

The Three Forces Shaping SLMs

The PyTorch Foundation identifies three defining trends that will shape SLM development.

First, global model diversification is accelerating. Chinese multilingual releases lead innovation. Reasoning-tuned models are proliferating. Competition drives rapid improvement.

Second, interoperability becomes a competitive advantage. Frameworks align around shared standards, runtimes achieve better compatibility, and integration keeps getting easier.

Third, governance is hardening appropriately. Security-audited releases are standard. Transparent data pipelines build trust. Compliance becomes a built-in feature.

Looking Ahead to 2027

Current trends will likely accelerate. SLM adoption will keep growing, and new use cases will emerge.

Businesses should prepare strategically. Investment in SLM expertise pays off: early adopters gain competitive advantages, while laggards face increasing disadvantages.

Meanwhile, the technology will keep improving. Models will become more efficient, fine-tuning techniques will advance, and edge deployment will expand.

Frequently Asked Questions

Q1: What makes Small Language Models different from large ones?

SLMs contain fewer parameters than large models. They’re specifically trained for particular tasks. This creates focused expertise rather than broad knowledge.

Large models can have tens or hundreds of billions of parameters. SLMs operate with millions or low billions. Yet they often match large-model performance on specific applications.

The key difference is specialization versus generalization. SLMs know specific domains deeply. Large models know everything superficially. Purpose determines which approach works better.

Q2: How much money can businesses save using SLMs?

Cost savings are substantial and multi-layered. Training costs can drop by 80-90% compared to large models, and infrastructure requirements shrink dramatically too.

AT&T reports SLMs are “superb in cost and speed.” Operating expenses remain low, and energy consumption decreases significantly.

Small companies can now afford AI capabilities. Previously, only tech giants accessed this technology. SLMs democratize AI for all businesses. ROI improves measurably across implementations.

Q3: Can SLMs really match large model accuracy?

Yes, but only for specific applications. Fine-tuned SLMs match large models on target tasks, and sometimes they even exceed them.

Mistral AI demonstrated this convincingly. Their small models outperform larger ones after fine-tuning. ABBYY confirms SLMs excel where precision matters.

However, general knowledge tasks still favor large models. Creative content generation requires broader capabilities. The key is matching the model to the task. Choose based on specific requirements.

Q4: What industries benefit most from SLMs?

Healthcare benefits enormously from specialized models. Financial services need precise fraud detection. Manufacturing requires real-time quality control.

Retail uses SLMs for personalized experiences. Telecommunications deploys them for network optimization. Any industry with specific, repetitive tasks benefits.

Edge computing applications particularly favor SLMs. Privacy-sensitive operations work better with them. Regulatory compliance becomes easier using SLMs. Cost-conscious industries prefer them strongly.

Q5: How do I start implementing SLMs in my business?

Start by identifying specific use cases. Evaluate which tasks need specialized AI. Look for repetitive, well-defined problems first.

Assess your current infrastructure capabilities honestly. Gather relevant training data systematically. Choose an appropriate base SLM carefully.

Work with experienced data scientists for fine-tuning. Test thoroughly before production deployment. Monitor performance continuously after launch. Scale successful implementations gradually across operations.